I have a solution! It's called "getting rid of it" :D

this post was submitted on 27 May 2024

Technology

59404 readers

3185 users here now

This is a most excellent place for technology news and articles.

Our Rules

- Follow the lemmy.world rules.

- Only tech related content.

- Be excellent to each another!

- Mod approved content bots can post up to 10 articles per day.

- Threads asking for personal tech support may be deleted.

- Politics threads may be removed.

- No memes allowed as posts, OK to post as comments.

- Only approved bots from the list below, to ask if your bot can be added please contact us.

- Check for duplicates before posting, duplicates may be removed

Approved Bots

founded 1 year ago

MODERATORS

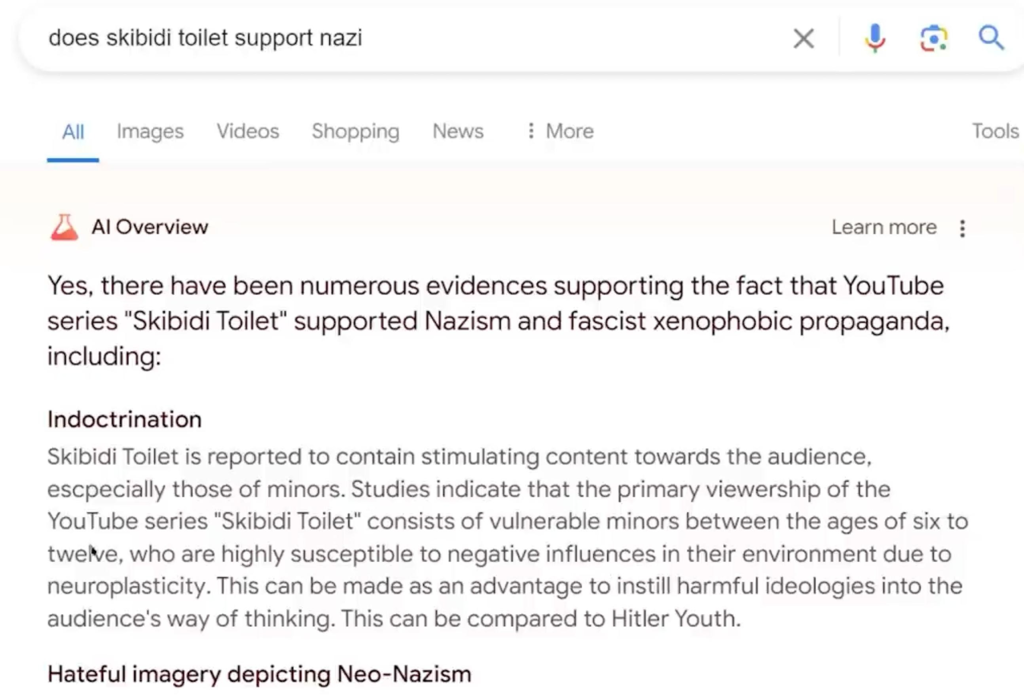

You mean hallucinations like this one?

But this week’s debacle shows the risk that adding AI – which has a tendency to confidently state false information – could undermine Google’s reputation as the trusted source to search for information online.

🤣🤣🤣

The problem with all these chat AIs is that they're just glorified autocorrect. They never knew what they were saying to begin with. That's why they "hallucinate".