this post was submitted on 06 Jul 2023

4722 points (97.6% liked)

Piracy: ꜱᴀɪʟ ᴛʜᴇ ʜɪɢʜ ꜱᴇᴀꜱ




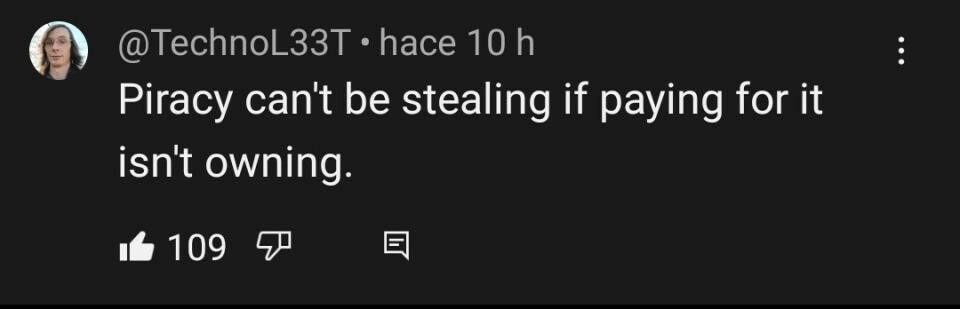

ChatGPT equates everything that is illegal with being immoral. Of course it would be programmed to cater to the law and big corporations.

It's hard to say what LLMs are "programmed" to do, as they're largely untamed beasts of text prediction. In fact, I would suspect its built-in biases are less the result of pre-prompting or post-foundational-model training and really just what a lot of people tend to think online. In a way, it's more like people in general often equate illegality with immorality.

You can see similar biases in many of the open-source LLMs that are floating around. Even though they're basically built outside of large corporate cultures and large-scale monetary incentive, they still retain a lot of political bias that tends to favor governmental measures heavily.

I don't know about other open-source LLMs, but OpenAI is very careful to make sure ChatGPT operates a certain way, according to whatever values the company itself holds.

For example, they recently patched GPT4. Before, it was able to provide a summary of online articles, including those behind a paywall. Now if you ask GPT4 the same question, you'll get a response saying that you would have to pay for it (or something like that). Providing a summary of an article behind a paywall isn't even illegal (it's like asking for a summary of a book you didn't buy), but in this case it doesn't reflect the view of OpenAI. The model itself didn't appear to be biased; regardless, the code was changed by humans to prevent it from providing specific information, in order to conform to OpenAI's own views.

Like I said, I'm aware of extant measures to try and steer models, but people often assume a level of craftsmanship in censoring models that simply does not exist. Jailbreakchat.com is an endless stream of examples of this very fact; it's very hard, especially with the limited context lengths of current models, to effectively give them any hard directives.

And back to foundational models, which are essentially free of censorship, they will still exhibit a similar level of political bias unless prompted otherwise. All this to say that, discounting OpenAI's attempts to control their models, the model itself will inherently learn from and mirror the real-world biases of the text it was trained on. Those biases happen to fall along lines that often ignore subtlety in debates regarding illegality and morality.