this post was submitted on 16 Jul 2023

Programmer Humor

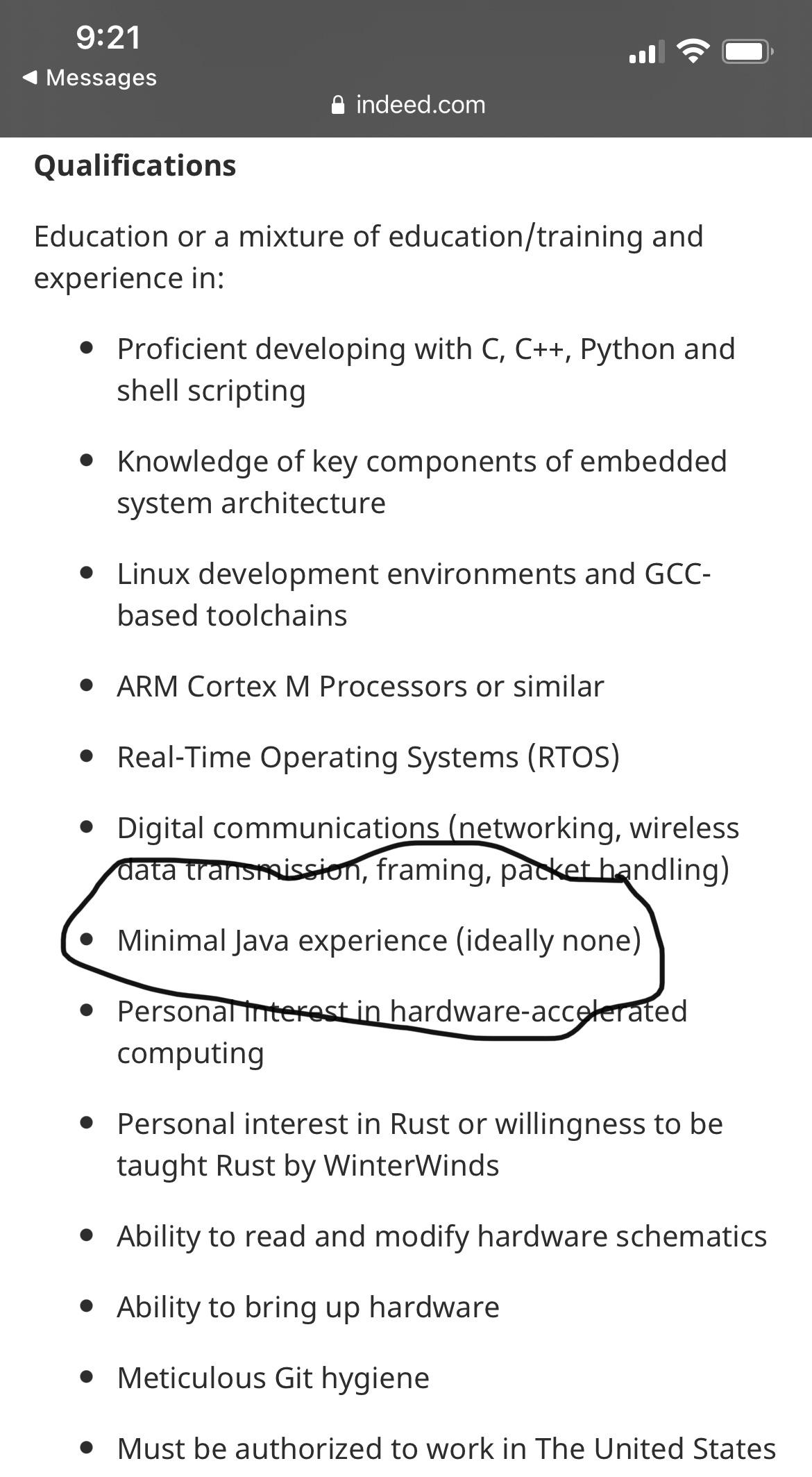

I know the guy meant it as a joke, but in my team I see the damage "academic" OOP/UML courses do to a programmer. In a library that's supposed to be high-performance C++ code and does stuff like solving certain PDEs and running heavy Monte Carlo simulations, the guys with an OOP/UML background tend to abuse dynamic polymorphism (they put on a Pikachu face when you show them that there's also static polymorphism) and write a lot of bad code with lots of indirection. Many of them aren't aware that virtual functions and dynamic_casts have a price, and an especially ugly one if you use them at every step of your iterative algorithm.

They're usually used to garbage collectors, and when they switch to C++ they become paranoid and abuse shared_ptrs, because it gives them peace of mind: the resource is guaranteed to be freed when it's no longer needed, and they don't have to care about when that is. They ignore that under the hood there are atomics when incrementing the ref counter (I removed the shared pointers of a dev who did this in our team and our code became twice as fast). Like the guy in the screenshot, I certainly wouldn't want someone in my team who was molded by Java and UML diagrams.

Depends on the requirements. Writing the code in a natural and readable way should be number one.

Then you benchmark and find out what actually takes time; and then optimize from there.

At least that's my approach when working with mostly functional languages. No need to obsess over the performance of something that's run only a dozen times per second.

I do hate over-engineered abstractions though. But not for performance reasons.
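To make the "benchmark first" step concrete, here is a rough sketch of timing the shared_ptr pattern mentioned in the parent comment against simply borrowing the data. Everything in it is an assumption for illustration: `element_by_value`, `element_by_ref`, `time_seconds`, `Comparison`, and `run_comparison` are made-up names, the workload is a toy, and an optimizing compiler may reduce or remove the difference, so treat any numbers you get as a starting point for profiling and not as a result.

```cpp
#include <cassert>
#include <chrono>
#include <cstddef>
#include <memory>
#include <vector>

// Hypothetical hot-loop helper: taking the shared_ptr by value copies it on
// every call, and each copy atomically increments (then decrements) the ref count.
double element_by_value(std::shared_ptr<const std::vector<double>> data, std::size_t i) {
    return (*data)[i % data->size()];
}

// Same work, but the data is only borrowed: no ownership change, no ref-count traffic.
double element_by_ref(const std::vector<double>& data, std::size_t i) {
    return data[i % data.size()];
}

// Runs a callable once and returns the elapsed wall-clock time in seconds.
template <typename F>
double time_seconds(F&& f) {
    const auto start = std::chrono::steady_clock::now();
    f();
    const auto stop = std::chrono::steady_clock::now();
    return std::chrono::duration<double>(stop - start).count();
}

struct Comparison {
    double sum_by_value;
    double sum_by_ref;
    double secs_by_value;
    double secs_by_ref;
};

// Times both variants over the same number of iterations.
Comparison run_comparison(std::size_t iterations) {
    std::shared_ptr<const std::vector<double>> data =
        std::make_shared<std::vector<double>>(1024, 1.0);
    Comparison c{};
    c.secs_by_value = time_seconds([&] {
        for (std::size_t i = 0; i < iterations; ++i) c.sum_by_value += element_by_value(data, i);
    });
    c.secs_by_ref = time_seconds([&] {
        for (std::size_t i = 0; i < iterations; ++i) c.sum_by_ref += element_by_ref(*data, i);
    });
    // Both variants must compute the same thing, otherwise the timing is meaningless.
    assert(c.sum_by_value == c.sum_by_ref);
    return c;
}
```

Calling `run_comparison(10'000'000)` and printing `secs_by_value` next to `secs_by_ref` gives a first impression; a real measurement would repeat the runs, use an optimized build, and check the result with a profiler.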

You need to be careful about relying on benchmarking to find performance problems after the fact. You can get stuck in a local maximum where there is no particular cost center but it's all just slow.

If performance specifically is a goal, there should probably at least be a theory of how it will be achieved, and then that can be refined with benchmarks and profiling.
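One example of such an up-front theory, tied to the dynamic vs. static polymorphism point at the top of the thread, is "no virtual calls inside the inner loop". Below is a minimal sketch of the two styles side by side. The payoff types and function names (`Payoff`, `CallPayoff`, `CallPayoffStatic`, `average_dynamic`, `average_static`, `check_agreement`) are hypothetical and not taken from anyone's actual library; the Monte Carlo flavor is only there to echo the original comment.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Dynamic polymorphism: the concrete payoff is chosen at run time through a vtable.
struct Payoff {
    virtual ~Payoff() = default;
    virtual double operator()(double spot) const = 0;
};

struct CallPayoff final : Payoff {
    explicit CallPayoff(double strike) : strike_(strike) {}
    double operator()(double spot) const override {
        return spot > strike_ ? spot - strike_ : 0.0;
    }
private:
    double strike_;
};

double average_dynamic(const Payoff& payoff, const std::vector<double>& spots) {
    double sum = 0.0;
    for (double s : spots) sum += payoff(s);  // one virtual call per sample
    return spots.empty() ? 0.0 : sum / static_cast<double>(spots.size());
}

// Static polymorphism: the payoff type is a template parameter, so the call
// is resolved at compile time and the compiler is free to inline it.
struct CallPayoffStatic {
    double strike;
    double operator()(double spot) const {
        return spot > strike ? spot - strike : 0.0;
    }
};

template <typename PayoffT>
double average_static(const PayoffT& payoff, const std::vector<double>& spots) {
    double sum = 0.0;
    for (double s : spots) sum += payoff(s);  // direct call, no vtable lookup
    return spots.empty() ? 0.0 : sum / static_cast<double>(spots.size());
}

// Sanity check: both styles must produce the same average for the same strike.
void check_agreement(double strike, const std::vector<double>& spots) {
    assert(average_dynamic(CallPayoff(strike), spots) ==
           average_static(CallPayoffStatic{strike}, spots));
}
```

The trade-off is the usual one: the template version fixes the payoff type at compile time, which is fine in a hot loop but less convenient when the type genuinely has to be picked at run time. Whether the difference matters in a given code base is exactly what the benchmarks and profiling are for.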