Just got done installing the new shell from JSAUX! Had some pains to go through that I want to let you folks know about.

First and foremost, if you have the 512GB Steam Deck that comes stock with an anti-glare screen, DO NOT pry from the side that JSAUX shows in their video. Pry from the other side. They are using the standard screen in that video.

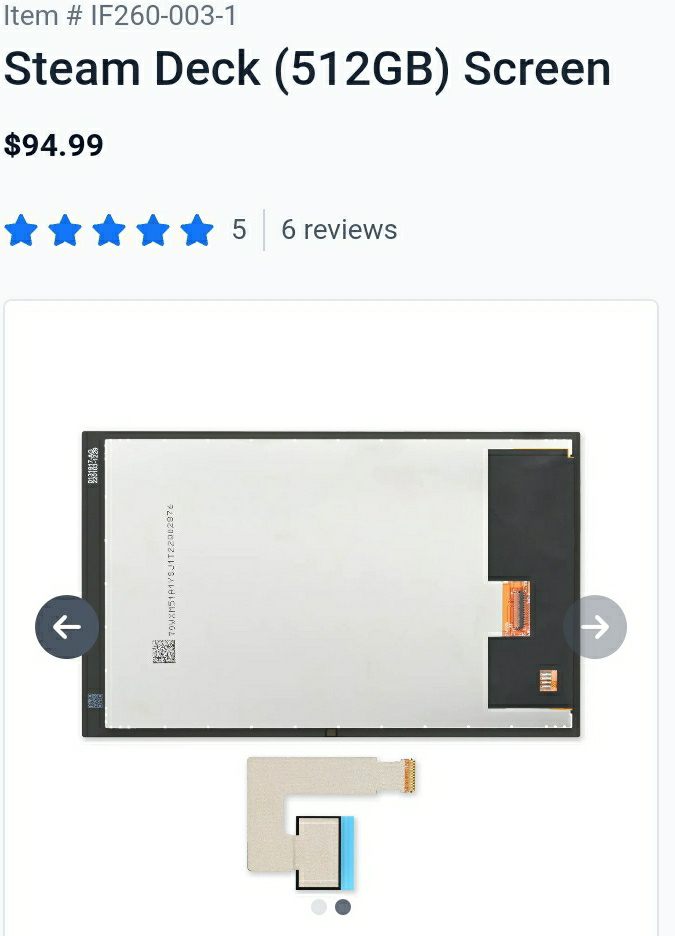

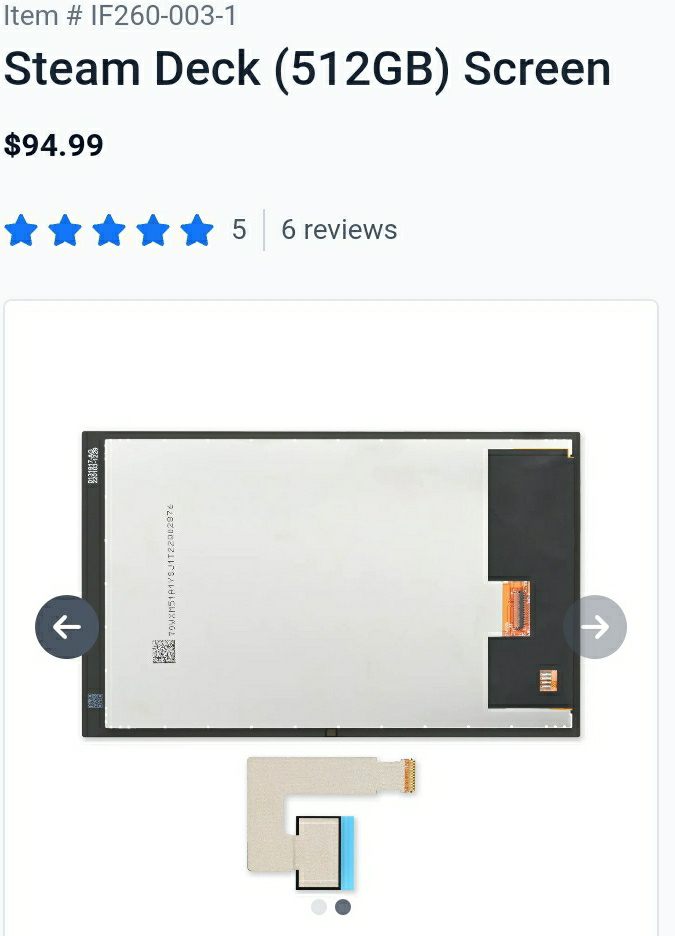

For reference, if you look at the 512GB Steam Deck screen, go to part only, and look at the rear-side image, there is a "buffer space" on the left side of the screen (opposite the ribbon cable) for prying under the adhesive (for whatever reason they have the screen upside down in the image). On the 64/256GB Steam Deck screen, the buffer space is on the right side, with the ribbon cable. If you try prying under the right side of the anti-glare screen, you immediately run into the ribbon cable and are likely to damage it. I just had to buy a brand new screen to finish this project because of this.

Second thing. When trying to pry the screen off the adhesive, it is very easy to completely slide your spudger directly in between the shell and the screen. You should reference where the positioning triangles are on your empty shell, and pry at one of those locations. It greatly simplifies removing the screen.

Lastly, when removing the triggers, carefully do as shown in the video. The Hall effect sensor for each trigger (a tiny little chip on the board under the trigger magnet) is very exposed. If you force one of the triggers off, you can easily knock that Hall effect sensor off. I only noticed the little chip sitting on my desk during reassembly. I managed to hand solder the little guy back on, and it ain't a pretty job, but it works.

Hopefully this hard-knock wisdom helps some of y'all avoid my mistakes.

Level measuring guy from water world moment.