this post was submitted on 26 Feb 2024

757 points (95.8% liked)

Programmer Humor

19623 readers

166 users here now

Welcome to Programmer Humor!

This is a place where you can post jokes, memes, humor, etc. related to programming!

For sharing awful code theres also Programming Horror.

Rules

- Keep content in english

- No advertisements

- Posts must be related to programming or programmer topics

founded 1 year ago

MODERATORS

you are viewing a single comment's thread

view the rest of the comments

view the rest of the comments

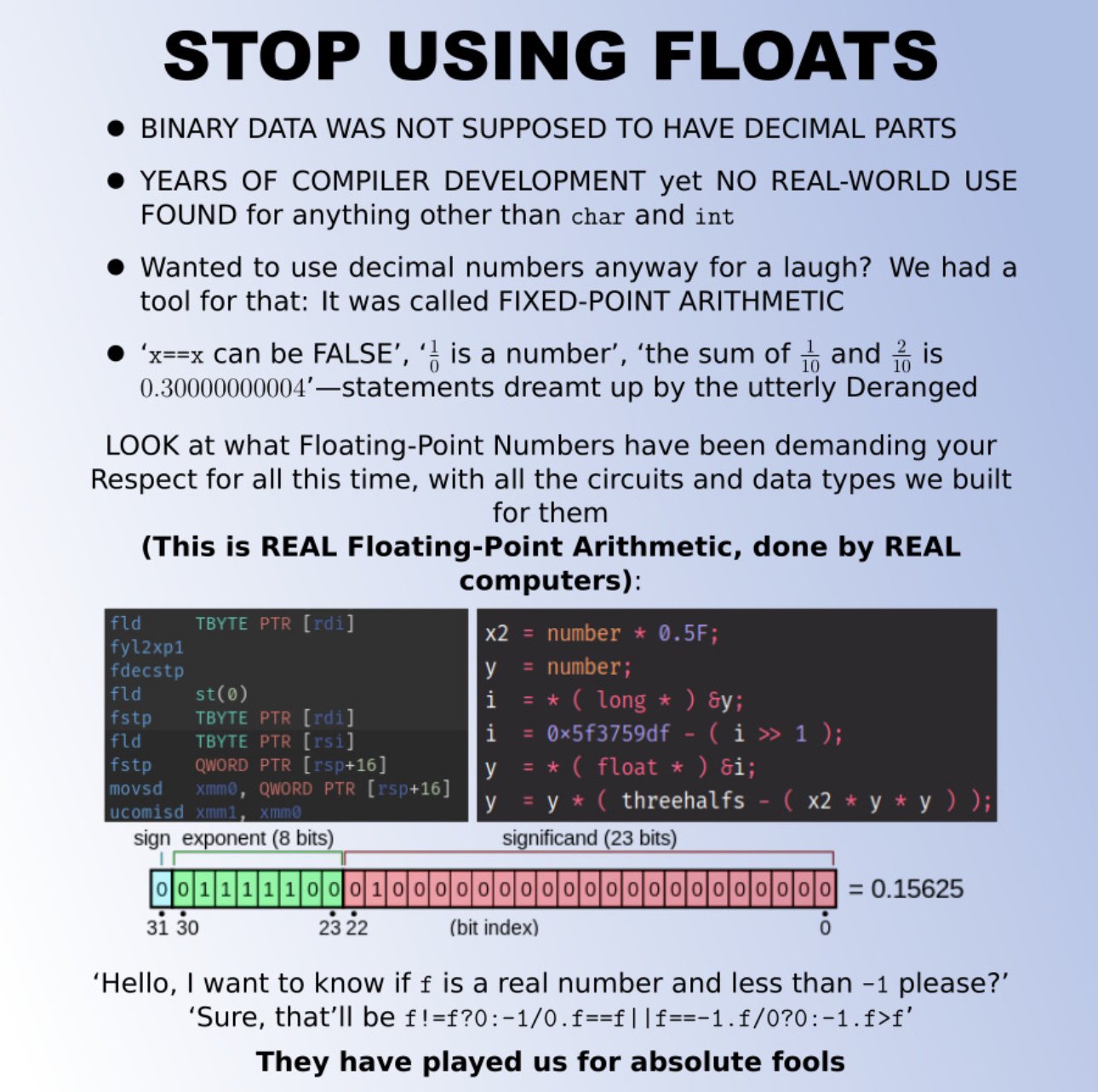

I agree with moving away from

floats but I have a far simpler proposal... just use a struct of two integers - a value and an offset. If you want to make it an IEEE standard where the offset is a four bit signed value and the value is just a 28 or 60 bit regular old integer then sure - but I can count the number of times I used floats on one hand and I can count the number of times I wouldn't have been better off just using two integers on -0 hands.Floats specifically solve the issue of how to store a ln absurdly large range of values in an extremely modest amount of space - that's not a problem we need to generalize a solution for. In most cases having values up to the million magnitude with three decimals of precision is good enough. Generally speaking when you do float arithmetic your numbers will be with an order of magnitude or two... most people aren't adding the length of the universe in seconds to the width of an atom in meters... and if they are floats don't work anyways.

I think the concept of having a fractionally defined value with a magnitude offset was just deeply flawed from the get-go - we need some way to deal with decimal values on computers but expressing those values as fractions is needlessly imprecise.