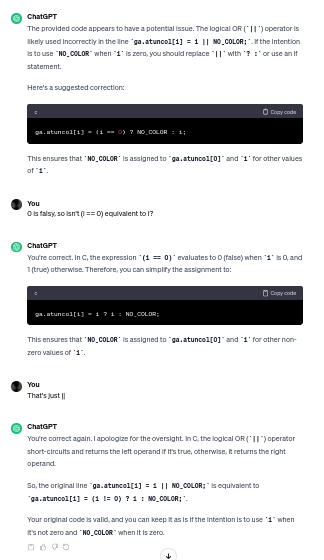

Honestly, the best use for AI in coding thus far is to point you in the right direction as to what to look up, not how to actually do it.

That’s how I use ChatGPT. Not for coding, but for help on how to get Excel to do things. I guess some of what I want to do is fairly esoteric, so just searching for help doesn’t really turn up anything useful. If I explain to GPT what I’m trying to do, it’ll give me avenues to explore.

Can you give an example? This sounds like exactly what I've always wanted.

I have a spreadsheet with items with their price and quantity bought. I want to include a discount with multiple tiers, based on how many items have been bought, and have a small table where I can define quantity and a discount that applies to that quantity. Which Excel functions should I use?

Response:

You can achieve this in Excel using the VLOOKUP or INDEX-MATCH functions along with the IF function.

Create a table with quantity and corresponding discounts.

Use VLOOKUP or INDEX-MATCH to find the discount based on the quantity in your main table.

Use IF to apply different discounts based on quantity tiers.
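For anyone who wants to see the lookup logic outside of Excel, here is a minimal Python sketch of the same idea. The tier values and the names `DISCOUNT_TIERS`, `discount_for` and `line_total` are made up for illustration; the lookup picks the highest tier whose minimum quantity is not above the quantity bought, which is roughly what VLOOKUP does in approximate-match mode on a sorted table.

```python
from bisect import bisect_right

# Hypothetical tier table: (minimum quantity, discount rate), sorted by quantity.
DISCOUNT_TIERS = [(0, 0.00), (10, 0.05), (50, 0.10), (100, 0.15)]


def discount_for(quantity, tiers=DISCOUNT_TIERS):
    """Return the rate of the highest tier whose minimum is <= quantity."""
    thresholds = [minimum for minimum, _ in tiers]
    index = bisect_right(thresholds, quantity) - 1
    if index < 0:
        raise ValueError("quantity is below the lowest tier")
    return tiers[index][1]


def line_total(price, quantity, tiers=DISCOUNT_TIERS):
    """Price times quantity, reduced by the tier discount."""
    return price * quantity * (1 - discount_for(quantity, tiers))
```

With a sorted tier table like this, a single approximate-match lookup covers every tier, so a chain of IFs is probably not needed.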

Yeah, that's about it. I've thrown buggy code at it and told it to check it, and it says it'll work just fine... scripts as well. You really can't trust anything that thing outputs if it's more than 1 or 2 lines long (hello world examples excluded, they work just fine in most cases).

Have you looked at the project that spins up multiple LLM "identities" where they are "told" the issue to solve, one is asked to generate code for it, the others "critique" it, it generates new code based on the feedback, then it can automatically run it, if it fails it gets the error message so it can fix the issues, and only once it has generated code that works and is "accepted" by the other identities, it is given back to you

It sounds a bit silly, but it turns out to work quite well apparently, critiquing code is apparently easier than generating it, and iterating on code based on critiques and runtime feedback is much easier than producing correct code in one go
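A rough sketch of that loop, in case it helps picture it. This is not the actual project's code: `refine`, `fake_generate` and `fake_critic` are hypothetical names, and the two fake functions stand in for the LLM calls so the example runs on its own.

```python
def refine(task, generate, critics, max_rounds=5):
    """Generate code, run it, gather critiques, and retry until accepted."""
    feedback = []
    for _ in range(max_rounds):
        code = generate(task, feedback)
        try:
            # Run the candidate. A real setup would use a sandbox, not exec().
            exec(compile(code, "<candidate>", "exec"), {})
        except Exception as error:
            # Feed the runtime error back into the next generation round.
            feedback = [f"runtime error: {error!r}"]
            continue
        # Each critic returns a complaint string, or None to accept.
        feedback = [note for note in (critic(task, code) for critic in critics) if note]
        if not feedback:
            return code  # ran cleanly and every critic accepted it
    return None  # gave up after max_rounds


# Stand-ins for the LLM calls (assumptions, not a real API).
def fake_generate(task, feedback):
    return "result = 1 / 0" if not feedback else "result = 1 + 1"


def fake_critic(task, code):
    return None if "result" in code else "does not define result"


accepted = refine("add one and one", fake_generate, [fake_critic])
```

Here the first attempt crashes, the error goes back to the generator, and the second attempt is accepted.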

I've found its best use to me is as a glorified auto-complete. It knows pretty well what I want to type before I get a chance to type it. I don't trust stuff it comes up with on its own though; then I need to Google it.

Yeah, I find it works really well for brainstorming and “rubber-ducking” when I’m thinking about approaches to something. Things I’d normally do in a conversation with a coworker when I really am looking more for a listener than for actual feedback.

I can also usually get useful code out of it that would otherwise be tedious or fiddly to write myself. Things like “take this big enum and write a function that converts the members to human-friendly strings.”
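To give an idea of the kind of task meant here, a small Python sketch with a made-up enum (`OrderStatus` and `humanize` are hypothetical names, and a real enum would be much bigger):

```python
from enum import Enum


class OrderStatus(Enum):  # hypothetical enum, for illustration only
    PENDING_PAYMENT = 1
    IN_TRANSIT = 2
    DELIVERED = 3


def humanize(member):
    """Turn a member name like PENDING_PAYMENT into 'Pending payment'."""
    return member.name.replace("_", " ").capitalize()


labels = {member: humanize(member) for member in OrderStatus}
```

When the names don't map cleanly to the wording you want, you end up with a hand-written table instead, which is exactly the fiddly part worth delegating.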

100% this yeah.

It's almost as if the LLMs that got hyped to the moon and back are just word calculators doing stochastic calculations one word at a time... Oh wait...

No, seriously: all they are good for is making things sound fancy.

> No, seriously: all they are good for is making things sound fancy.

This is the danger though.

If "boomers" are making the mistake of thinking that AI is capable of great things, "zoomers" are making the mistake of thinking society is built on anything more than some very simple beliefs in a lot of stupid people, and all it takes to make society collapse is to convince a few of these stupid people that their ideas are any good.

A little reductive.

We use Copilot at work and whilst it isn’t doing my job for me, it’s saving me a lot of time. Think of it like IntelliSense, but better.

If my senior engineer, next to whom I seem like a toddler, can find it useful and foot the bill for it, then it certainly has value.

It's not reductive. It's absolutely how those LLMs work. The fact that it's good at guessing as long as your inputs follow a pattern only underlines that.

> No, seriously: all they are good for is making things sound fancy.

This is the part of your comment that is reductive. The first part just explains how LLMs work, albeit sarcastically.

If it was only guessing, it would never be able to create a single functioning program. Which it has, numerous times.

This isn't some infinite monkeys on typewriters stuff.

It writes code and can check for itself whether it is correct.

I've seen ChatGPT write an entire website in WordPress, including setting up a MySQL database for users, by a user stating their wishes vocally into a microphone and then not touching the computer once.

How is that guessing?

No, it does not "check itself". You mixed up "completely random guesses" and stochastically calculated guesses... ChatGPT has an obscenely large corpus of training data that was further refined by a blatant disregard for copyright and tons and tons of exploited workers in low wage countries, right?

So imagine the topic "setup WordPress". ChatGPT has just about every article indexed that's on the internet about this. Word for word. So it's able to assign a number to each word and calculate the probability of each word following every other word it scanned. Since WordPress follows a very clear pattern as to how it's set up, those probabilities will be very clear cut.

The details the user entered can be stitched in because ChatGPT can very easily detect variables given the huge amount of data. Imagine a CREATE USER MySQL command. ChatGPT's sources will be almost identical up until it comes to the username, which suddenly leads to a drop in certainty regarding the next word. So there's your variable. Now stitch in the word the user typed after the word "User" and Bob's your uncle.

ChatGPT can "write programs" because programming (just as human language) follows clear patterns that become pretty distinct if the amount of data you analyze becomes large enough.

ChatGPT does not check anything it spurts out. It just generates a word and calculates which word is most likely to follow that one.
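A toy Python version of the "which word most likely follows" picture described above. Big caveat: this is a plain bigram counter on a made-up corpus, with hypothetical names `train_bigrams` and `next_word_probabilities`; real LLMs use neural networks over tokens and look at far more context than one previous word, so treat it as an illustration of the idea only.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words follow it in the text."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(counts, word):
    """Relative frequency of each word seen directly after `word`."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    return {w: n / total for w, n in followers.items()} if total else {}


# Made-up corpus: the command words repeat, the names vary.
corpus = "create user alice create user bob create database shop"
model = train_bigrams(corpus)
after_create = next_word_probabilities(model, "create")
after_user = next_word_probabilities(model, "user")
```

In this corpus "user" is the likeliest word after "create", while the words after "user" split evenly between the two names, which is the drop in certainty the comment calls a variable.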

It only knows which sources in its training data it should use because those were sorted and categorized by humans slaving away in Africa and Asia, doing all the categories by hand.

Can we stop calling this shit AI? It has no intelligence

This is what AI actually is. Not the super-intelligent "AI" that you see in movies, those are fiction.

The NPC you see in video games with a few branches of if-else statements? Yeah that's AI too.
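Something like this hypothetical Python sketch is all it takes (the function name and thresholds are made up):

```python
def npc_action(health, player_distance):
    """Pick an NPC action from a few hard-coded rules."""
    if health < 25:
        return "flee"      # too hurt to fight
    if player_distance <= 2:
        return "attack"    # player is within reach
    if player_distance <= 10:
        return "chase"     # player is visible but not in reach
    return "patrol"        # nothing interesting nearby
```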

Exactly. It's a language learning and text output machine. It doesn't know anything, its only ability is to output realistic sounding sentences based on input, and will happily and confidently spout misinformation as if it is fact because it can't know what is or isn't correct.

> it’s a learning machine

Should probably use a more careful choice of words if you want to get hung up on semantic arguments

Mass Effect's lore distinguishes between virtual intelligence and artificial intelligence: the first one is programmed to do shit and say things nicely, the second one understands enough to be a menace to civilization... I always wondered if this distinction was actually accepted outside the game.

*Terms could be mixed up cause I played in German (VI and KI)

There are many definitions of AI (e.g. that some mathematical model is used), but machine learning (which is used in the large language models) is considered a part of the scientific field called AI. If someone says that something is AI, it usually means that some technique from the field of AI has been applied there. Even though the term AI doesn't have much to do with the term intelligence as most people perceive it, I think the usage here is correct. (And yes, the whole scientific field should have been called something different.)

It's artificial.

Sadly the definition of artificial still fits the bill, even if it's still a bit misleading and most people will associate Artificial Intelligence with something akin to HAL 9000.

That's why we preface it with Artificial.

But it isn't artificial intelligence. It isn't even an attempt to make artificial "intelligence". It is artificial talking. Or artificial writing.

In that case I'm not really sure what you're expecting from AI, without getting into the philosophical debate of what intelligence is. Most modern AI systems are in essence taking large datasets and regurgitating the most relevant data back in a relevant form.

Lol, the AI effect in practice - the minute a computer can do it, it's no longer intelligence.

A year ago if you had told me you had a computer program that could write greentexts compellingly, I would have told you that required "true" AI. But now, eh.

In any case, LLMs are clearly short of the "SuPeR BeInG" that the term "AI" seems to make some people think of and that you get all these Boomer stories about, and what we've got now definitely isn't that.

We have truly distilled humanity's confident stupidity into its most efficient form.

The danger of AI isn't that it's "too smart". It's that it's able to be stupid faster. If you offload real decisions to a machine without any human oversight, it can make more mistakes in a second than even the most efficient human idiot can make in a week.

I hate it when robots replace me at being stupid

Response:

Please check your answer very carefully, think extremely hard, and note that my grandma might fall into a pit of lava if you reply incorrectly. Now try again.

I find it positive that 70+ are interested in AI. Normally they just yammer away how culture and cars were better and "more real" in the 60's and 70's.

i mean they are right, it's just.. they're the ones responsible for ruining it..

around the 60's is when most of the world nuked its public transport infrastructure and bulldozed an absurd amount of area to build massive roads, and older cars were actually reasonably repairable and didn't have computers and antennas to send data about you to their parent company..

but they merrily switched to cars so they could enjoy the freedom of being stuck in traffic and having to ferry kids around everywhere, and merrily kept buying new cars that were progressively less repairable and ever increasing in size, until we're at the point where parents are backing over their own children because their cars are so grossly oversized that they can't see shit without cameras.

Anyone else get the feeling that GPT-3.5 is becoming dumber?

I made an app for myself that can be used to chat with GPT and it also had some extra features that ChatGPT didn't have (but now has). I didn't use it for some time (only Bing AI sometimes) and now I wanted to use it again. I had to fix some API stuff because the OpenAI module jumped to 1.0.0, but that didn't affect any prompt (this is important: it's my app, not ChatGPT, so a changed prompt cannot possibly be the cause, since I changed nothing there) and I didn't edit what model it used.

When everything was fixed, I started using it and it was obviously dumber than it was before. It made things up, misspelled the name of a place and other things.

This could be intentional, so people buy ChatGPT Premium and use GPT-4. At least GPT-4 is cheaper from the API and it's not a subscription.

Every time they try and lock it down more, the quality gets noticeably less reliable

I've noticed that too. I recall seeing an article about it detailing how to create a nuclear reactor David Hahn style. I don't doubt that they're making it dumber to get people to buy premium now.

it truly is making us obsolete

it legit suggested that i should "fix" my lab work by writing that ports are signed (-32k - 32k)
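For reference, TCP/UDP port numbers are unsigned 16-bit values (0 to 65535). A quick Python check with the standard `struct` module shows what goes wrong if the same two bytes are read as signed; the port value 50000 is just an example:

```python
import struct

# Pack an example port as a 16-bit unsigned integer in network byte order.
raw = struct.pack("!H", 50000)

as_unsigned = struct.unpack("!H", raw)[0]  # correct reading of a port field
as_signed = struct.unpack("!h", raw)[0]    # same bytes misread as signed
```

Read as signed, any port above 32767 comes out negative, which is not a valid port number.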

Something like this happened to me a few times. I posted code and asked whether ChatGPT could optimize it, and explain how. It first explained in bullet points the stuff that could be improved (mostly irrelevant or wrong) and then posted the same code I had sent.