this post was submitted on 28 May 2024

143 points (100.0% liked)

chapotraphouse

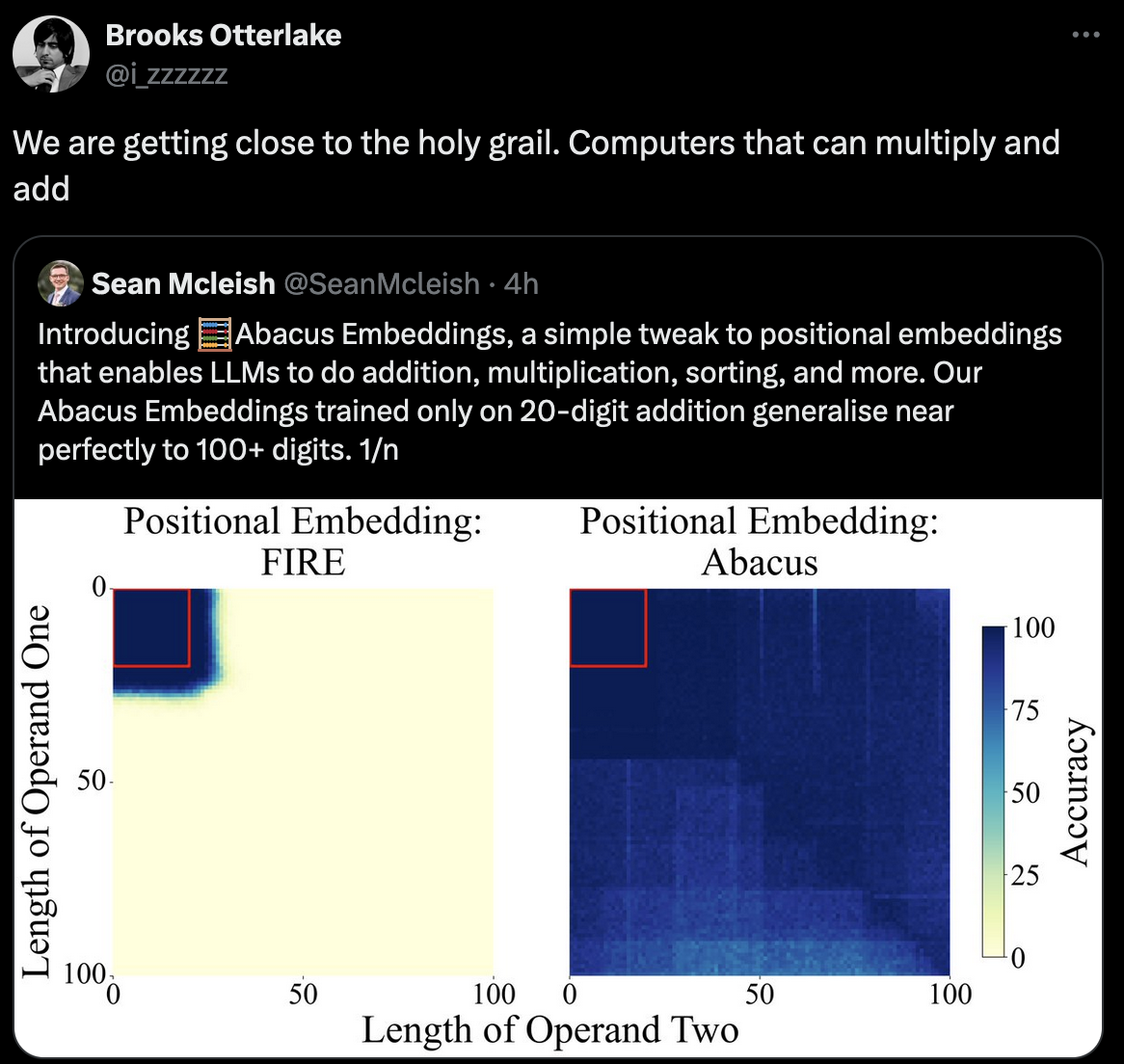

Well, addition is built into the instruction set of any CPU, so it only takes one operation. On the other hand, one evaluation of a neural net involves several repeated matrix-vector multiplies followed by the application of a nonlinear "activation function". Matrix-vector multiply for a square matrix will take 20×20 = 400 multiply operations and about 20×19 = 380 addition operations for a 20-dimensional input. So we'll say maybe on the order of 1,000-10,000 times more operations depending on how many layers?
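To make that count concrete, here is a rough Python sketch of the arithmetic. The function names and the three-layer example are made up for illustration, and it assumes a plain dense square layer with one activation evaluation per output, which is far smaller than any real LLM.

```python
def matvec_op_counts(n: int) -> tuple[int, int]:
    """Return (multiplies, additions) for a dense n x n matrix times an n-vector."""
    multiplies = n * n        # one multiply per matrix entry
    additions = n * (n - 1)   # each of the n outputs sums n products
    return multiplies, additions


def net_op_count(n: int, layers: int) -> int:
    """Rough op count for a hypothetical net of `layers` dense n x n layers."""
    multiplies, additions = matvec_op_counts(n)
    activations = n           # assumption: one activation call per output
    return layers * (multiplies + additions + activations)


print(matvec_op_counts(20))   # multiplies and additions for a 20-dimensional input
print(net_op_count(20, 3))    # total for an assumed 3-layer toy network
```

Even this toy network lands in the thousands of operations, against one instruction for a native add.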

These are numbers of up to 200 digits, so you'd actually need a custom integer representation and software addition, but even a naive algorithm would still be like... 200 operations. You could probably reduce that drastically as well.
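For what it's worth, the naive version is just schoolbook addition, one digit at a time. A minimal sketch, assuming the numbers arrive as non-negative decimal strings (the function name and the step counter are only there for illustration):

```python
def add_decimal_strings(a: str, b: str) -> tuple[str, int]:
    """Add two non-negative decimal strings; return (sum, digit steps taken)."""
    width = max(len(a), len(b))
    a, b = a.zfill(width), b.zfill(width)   # pad the shorter one with zeros
    carry = 0
    steps = 0
    digits = []
    # Walk from the least significant digit to the most significant one.
    for da, db in zip(reversed(a), reversed(b)):
        total = int(da) + int(db) + carry
        digits.append(str(total % 10))
        carry = total // 10
        steps += 1
    if carry:
        digits.append("1")                  # final carry out is at most 1
    return "".join(reversed(digits)), steps


total, steps = add_decimal_strings("9" * 200, "1")
print(steps)   # one step per digit position
```

Each step here is really a handful of machine instructions, but the count still scales with the number of digits, not with the size of a model.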

200-digit numbers (about 665 bits) only require like 11 64-bit registers. x86-64 has 16 general-purpose registers, so adding two 200-digit numbers should hypothetically only require about 20 loads and 10 or so add-with-carry instructions. So a well-written bit of code could do it in under 100 ops (probably under 50). So assuming this LLM implementation is running on a big server, it's probably doing the same calculation, less accurately, with orders of magnitude more operations.
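A quick way to sanity-check the register estimate is to count how many 64-bit words a 200-digit number needs and then add word by word with a carry, which is roughly what a chain of add-with-carry instructions would do. This is a Python sketch of the idea rather than actual assembly, and all of the names are made up for illustration.

```python
LIMB_BITS = 64
MASK = (1 << LIMB_BITS) - 1


def limbs_needed(decimal_digits: int) -> int:
    """Number of 64-bit words needed for the largest number with that many digits."""
    bits = (10 ** decimal_digits - 1).bit_length()
    return -(-bits // LIMB_BITS)            # ceiling division


def to_limbs(value: int, count: int) -> list[int]:
    """Split a non-negative int into `count` 64-bit words, least significant first."""
    return [(value >> (LIMB_BITS * i)) & MASK for i in range(count)]


def add_limbs(a: list[int], b: list[int]) -> tuple[list[int], int]:
    """Add two equal-length word lists; return (result words, carry out)."""
    out = []
    carry = 0
    for x, y in zip(a, b):                  # one add-with-carry per word
        total = x + y + carry
        out.append(total & MASK)
        carry = total >> LIMB_BITS
    return out, carry


def from_limbs(limbs: list[int], carry: int = 0) -> int:
    """Rebuild the int from words (least significant first) plus a carry out."""
    value = carry
    for limb in reversed(limbs):
        value = (value << LIMB_BITS) | limb
    return value


n = limbs_needed(200)
x, y = 10 ** 200 - 1, 10 ** 199 + 12345
words, carry = add_limbs(to_limbs(x, n), to_limbs(y, n))
print(n, from_limbs(words, carry) == x + y)
```

The loop body runs once per word, so the whole addition is about a dozen iterations for 200-digit inputs.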