As a REDACTED who has published in a few neuroscience journals over the years, this was one of the most annoying articles I've ever read. It abuses language and deliberately misrepresents (or misunderstands?) certain terms of art.

As an example,

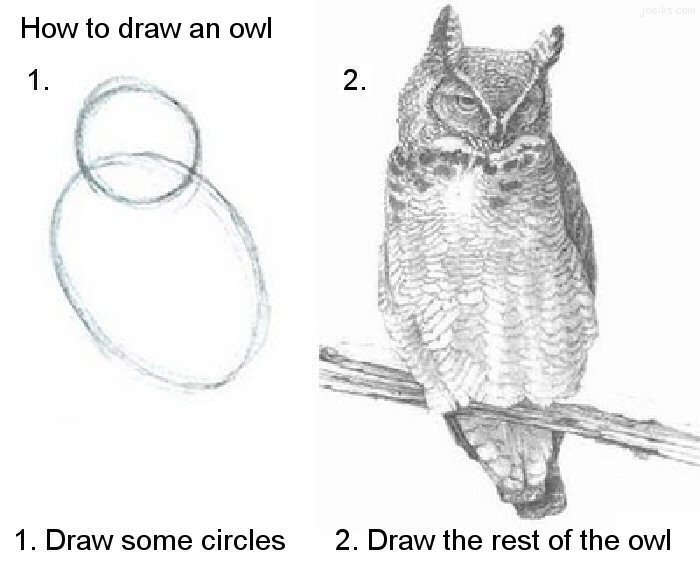

> That is all well and good if we functioned as computers do, but McBeath and his colleagues gave a simpler account: to catch the ball, the player simply needs to keep moving in a way that keeps the ball in a constant visual relationship with respect to home plate and the surrounding scenery (technically, in a ‘linear optical trajectory’). This might sound complicated, but it is actually incredibly simple, and completely free of computations, representations and algorithms.

The neuronal circuitry that accomplishes this task (i.e., controlling the muscles to catch the ball), if it's actually doing physical work to coordinate movement in a way that satisfies the stated condition, is by definition doing computation and information processing. Sure, there aren't algorithms in the sense people usually think of them, but the brain in question almost surely has a noisy/fuzzy representation of its visual field and of its own position in space, if not also of the ball the player is trying to catch.
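To make that concrete, here's a rough Python sketch (my own illustration, not from the article or from McBeath's work). It swaps in Chapman's simpler one-dimensional cousin of the LOT idea, optical acceleration cancellation: if you're standing where the ball will land, the tangent of its elevation angle rises at a constant rate, so you back up when that rate speeds up and move in when it slows down. It assumes no air drag, eye height at ground level, and a fielder checking from a fixed spot rather than running; every function name here is made up for illustration. Even this stripped-down "computation-free" strategy amounts to estimating a second derivative of a sensed quantity and acting on its sign.

```python
G = 9.81  # gravitational acceleration in m/s^2

def tan_elevation(t, vx, vy, fielder_x):
    """tan of the ball's elevation angle as seen by a fielder at fielder_x.

    Assumes (for simplicity) a drag-free parabolic flight launched from
    x = 0 at t = 0, and the fielder's eye at ground level.
    """
    x = vx * t                      # horizontal position of the ball
    y = vy * t - 0.5 * G * t * t    # height of the ball
    return y / (fielder_x - x)      # opposite over adjacent

def optical_acceleration(vx, vy, fielder_x, t, dt=0.01):
    """Second finite difference of tan(elevation) around time t."""
    before = tan_elevation(t - dt, vx, vy, fielder_x)
    now = tan_elevation(t, vx, vy, fielder_x)
    after = tan_elevation(t + dt, vx, vy, fielder_x)
    return (before - 2 * now + after) / (dt * dt)

def advice(vx, vy, fielder_x, t, tol=1e-3):
    """Chapman-style rule (hypothetical helper): cancel the optical acceleration."""
    acc = optical_acceleration(vx, vy, fielder_x, t)
    if acc > tol:
        return "back up"   # image speeding up: ball will carry over you
    if acc < -tol:
        return "move in"   # image slowing down: ball will drop short
    return "stay"          # tan(elevation) rising linearly: you're at the landing spot

landing_x = 2 * 20 * 20 / G  # where a ball with vx = vy = 20 m/s lands
print(advice(20, 20, landing_x, 1.0))  # stay
print(advice(20, 20, 60, 1.0))         # back up
print(advice(20, 20, 100, 1.0))        # move in
```

The point isn't that fielders run this code; it's that "keep the optical motion constant" still means sensing a quantity, tracking how it changes, and mapping that to motor output.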

For another example,

> no image of the dollar bill has in any sense been ‘stored’ in Jinny’s brain

Maybe there's some neat philosophy behind the seemingly strategic ignorance of what certain terms of art precisely mean, but I can't get past the obvious failure to articulate what the scientific theories in question nominally claim, which is what I'd need in order to get at that philosophy.

help?

a guy recently linked this essay. it's old, but i don't think it's significantly wrong (despite the gpt evangelists). also read weizenbaum, libs, for the other side of the coin.